As it works to harness the potential of generative artificial intelligence (AI), the Google DeepMind team has consistently stressed that tools to identify and counteract AI-generated content matter just as much. Central to that effort is the growing threat of deepfakes: manipulated digital renderings that convincingly mimic real people, situations, and events. Demis Hassabis, the CEO of Google DeepMind, has underlined how pressing the challenge is, particularly with contentious elections looming in 2024 in both the United States and the United Kingdom. That timeline adds urgency to building systems that can detect and flag AI-generated visual content.

After years of development, the DeepMind team has announced its latest creation: SynthID. Unveiled at Google's Cloud Next conference, the tool watermarks AI-generated images in a way that is virtually imperceptible to the human eye but readily detectable by dedicated AI-powered scanners. The watermark is embedded directly in the image's pixels and, according to Hassabis, does not disrupt the image's visual integrity, quality, or the viewer's experience. Hassabis adds that the watermark survives alterations such as cropping and resizing, which sets it apart from traditional watermarks that are easy to circumvent. As DeepMind refines SynthID's underlying models, the watermark is expected to become even less perceptible to humans and even easier for AI-based detection tools to identify.

The mechanics of the SynthID watermarking technique remain largely undisclosed. Hassabis argues that it is prudent to withhold details of the system's inner workings, since greater transparency could help malicious actors devise workarounds. SynthID is launching first within Google's ecosystem: Google Cloud customers who use the Vertex AI platform and the Imagen image generator will be able to embed and detect the watermark. As the tool gains real-world exposure, Hassabis expects iterative improvements, wider deployment, and fuller disclosure of how it works.

Looking ahead, Hassabis envisions a future where SynthID sets the standard for authenticity across the internet. The technology's foundational concepts could conceivably extend beyond images to encompass other media like video and text. While acknowledging SynthID's current status as a beta test, Hassabis is optimistic about its transformative potential, albeit without regarding it as an all-encompassing solution to the deepfake predicament.

Google is not alone in these ambitions. A recent coalition including Meta, OpenAI, Google, and other major AI players committed to strengthening safeguards and security systems for AI technologies. The C2PA protocol, which uses cryptographic metadata to label AI-generated content, has also drawn attention from several companies. Google's work on AI-based detection appears to be part of a broader trend, and while multiple standards may emerge, Hassabis is confident that watermarking can contribute significantly to curbing deceptive AI-generated content on the web.
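To make the idea of cryptographic metadata labeling concrete, here is a minimal sketch in Python. It is not the C2PA format and has nothing to do with how SynthID works. It only illustrates the general pattern of binding a signed "AI-generated" claim to an image's hash. The function names, the claim fields, and the shared `DEMO_KEY` are all hypothetical, and the HMAC stands in for the certificate-based signatures a real provenance system would use.

```python
import hashlib
import hmac
import json

# Hypothetical shared key, for illustration only. A real provenance
# system would sign with a private key tied to a certificate.
DEMO_KEY = b"demo-signing-key"


def make_manifest(image_bytes: bytes, generator: str) -> dict:
    """Build a signed claim that labels the image as AI-generated."""
    claim = {
        "generator": generator,
        "ai_generated": True,
        # Binding the claim to the exact bytes of the image.
        "content_sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(image_bytes: bytes, manifest: dict) -> bool:
    """Check that the claim is untampered and matches the image bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim was edited after signing
    actual_hash = hashlib.sha256(image_bytes).hexdigest()
    return manifest["claim"]["content_sha256"] == actual_hash
```

Because a label like this travels alongside the image rather than inside its pixels, it fails verification once the file is edited and disappears entirely if the metadata is stripped. That is the contrast with a watermark embedded in the pixels themselves.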

SynthID's unveiling is timed to coincide with Google's Cloud Next conference, where business customers learn about the latest enhancements to Google's Cloud and Workspace offerings. The growing popularity of the Vertex AI platform and its increasingly sophisticated models, together with the progress made on SynthID, prompted the decision to introduce the technology now.

Thomas Kurian, Google Cloud's CEO, points out that customers' concerns go beyond the looming threat of deepfakes to more mundane AI detection needs. Customers who use AI tools for tasks like writing ad copy or generating product descriptions want ways to verify the authenticity of the content they work with. In retail, for example, companies that generate product descriptions with AI need to distinguish carefully between genuine product imagery and AI-generated visuals used for brainstorming and creative refinement.

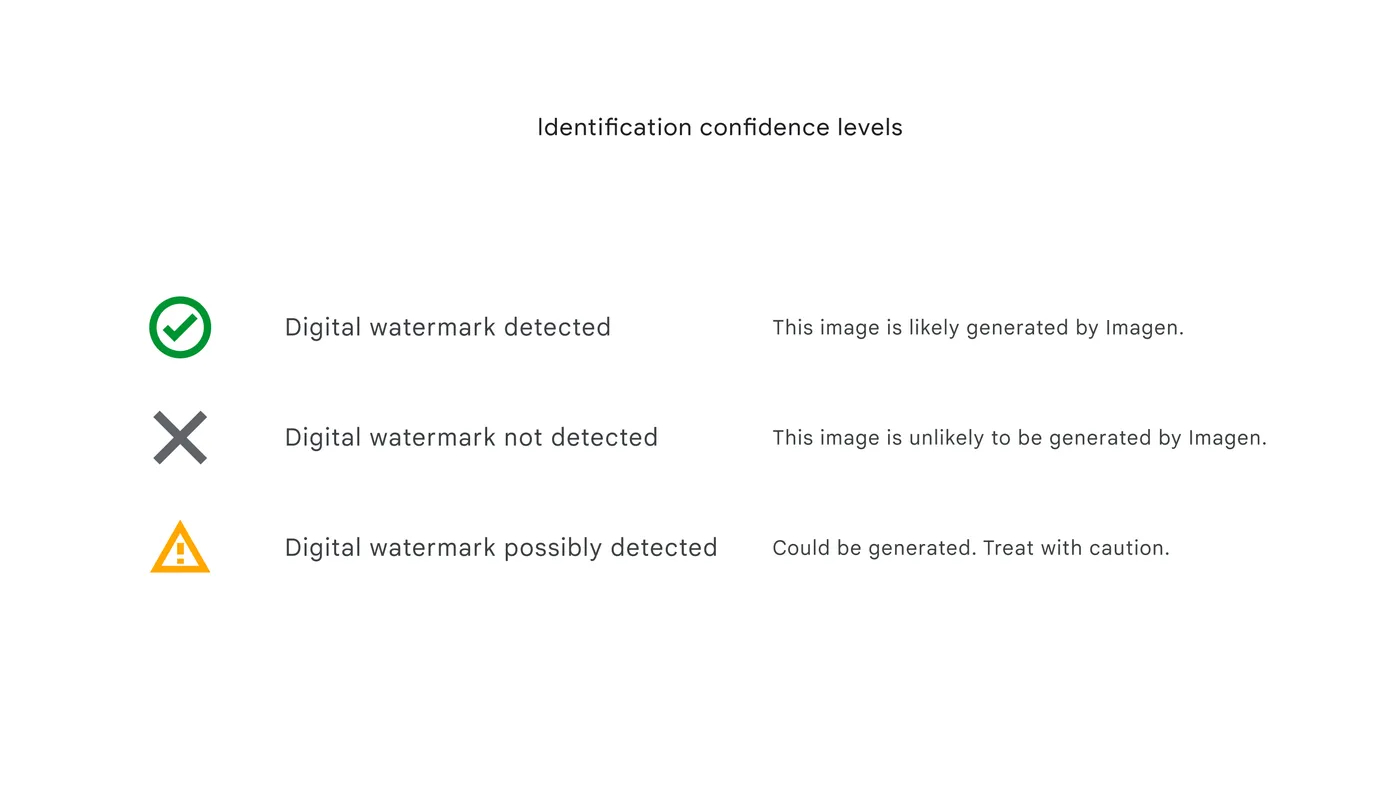

As SynthID begins its rollout, Kurian is focused on how it will be used in practice. He envisions integrations with tools like Slides and Docs to establish the provenance of sourced images. Hassabis looks further ahead, suggesting that SynthID could become a Chrome extension or a built-in browser feature capable of identifying AI-generated images across the web. The challenge lies in determining the best user experience: should SynthID proactively highlight all AI-generated content, or respond only when a user asks? How to signal AI involvement visually is also unsettled, with options ranging from attention-grabbing indicators to subtler cues.

SynthID's introduction also marks the start of a technology arms race. Security has long been a game of cat and mouse, in which each new safeguard is unveiled and hackers then try to outwit it. Google DeepMind is acutely aware of this and treats the ongoing evolution of SynthID as a necessity rather than an exception. Hassabis concedes that it will require the kind of vigilance associated with antivirus software: a constant watch for new attacks and transformations. For now, Google controls the entire lifecycle of AI image creation, deployment, and detection. The grander ambition is to extend SynthID across the internet, but Hassabis approaches that goal with caution and emphasizes that the immediate focus is the foundational proof of concept. The implications of an AI-authenticated online world can only be explored once this foundational technology has proven itself.

The advent of SynthID signals Google's proactive engagement with the challenges posed by deepfakes, and while it is not a panacea, the technology shows promise. As the technological landscape continues to evolve, Google's efforts to bolster online authenticity will likely inspire a broader discussion about AI's role in shaping our digital lives.