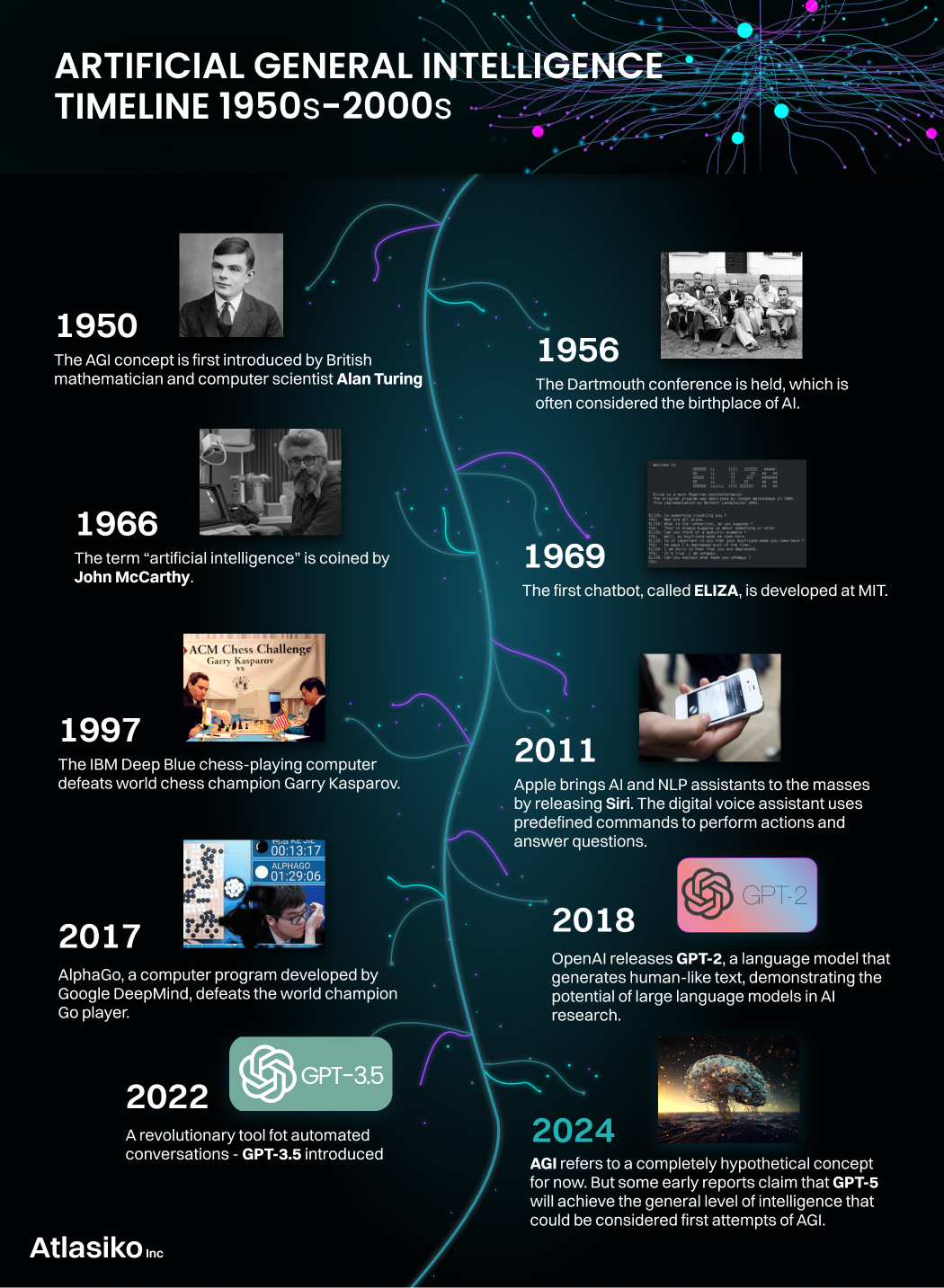

The recent boom in AI development has brought many questions and concerns to the surface. Now that the world has seen how capable this technology can be, science fiction plots about androids whose intelligence rivals that of humans no longer seem impossible. Some experts state that the first steps toward the next generation of AI, artificial general intelligence, have already been taken.

We’ve decided to do our own research on AGI (artificial general intelligence): the actual state of its development, its characteristics, and predictions about it. In this article, Atlasiko shares that analysis to answer the popular question “What is AGI in AI?”. Read on so you don’t miss a significant transformation in the tech industry that could impact the whole world.

What is AGI?

Let’s start with a definition. Artificial general intelligence is a term for a software-based intelligent agent with human-level cognitive abilities. In other words, it’s an AI that has developed to the point where it can solve unfamiliar problems and tasks on par with humans. Some specialists instead define AGI as a system that works autonomously and outperforms ordinary people at economically valuable tasks.

Besides having two competing definitions, artificial general intelligence also goes by several names. The system is also called general artificial intelligence, strong AI, or true AI. In some papers, you may come across the name “real artificial intelligence”.

What is the main concept of AGI?

The fundamental concepts that characterize AGI are “intelligence” and “consciousness”. To be considered AGI, a next-level AI has to attain artificial cognition similar or even identical to natural human cognition. Just as our minds form new neural connections through experience, learning, and problem-solving, an artificial general intelligence has to develop new links within its system and act on them in a way that resembles conscious thought. While “intelligence” is fairly clear (it refers to cognitive capabilities), there are differing views on what “consciousness” should mean here.

Naturally, the progress of AI toward more advanced stages attracts not just computer scientists but also philosophers who study the mind and human existence, and they offer their own perspectives on what an AGI system might be. A hypothesis proposed by the American philosopher John Searle gives us two definitions that address the concept of consciousness.

Strong AI vs Weak AI

| Strong AI | Weak AI |
|---|---|
| The AI system acts upon its subjective experience producing human-level thought processes and making conscious decisions, which cannot be tested in typical ways. | The AI system only replicates the behavior of human minds, pretending to have consciousness as a major cognitive quality, but cannot actually process its subjective conscious experience. |

In his “Chinese room argument”, Searle argues that AI cannot actually become “strong” in the sense of this hypothesis and obtain a human-like mind. The most we can achieve is weak AI, that is, a program that merely behaves in a generally intelligent way.

At the same time, computer scientists such as Stuart Russell and Peter Norvig set the philosophical hypotheses aside, saying that what should be evaluated is the outcome: it doesn’t matter whether an AI merely pretends to think or actually thinks like a person, as long as it delivers the expected results. The debate about whether artificial general intelligence requires real consciousness is therefore still going on.

Even though modern science has advanced to the point where we can make artificial organs and body parts, we still can’t replicate the mental side of our existence. General AI is essentially an attempt to reproduce the mind and grant machines human-like intelligence.

Perhaps, even from this brief answer to “What is AGI?” you can tell that the idea is rather controversial. Indeed, some find it fascinating while others say it’s outright creepy. There are many dimensions to the topic, as well as thoughts on it, which we address further in the article.

What is AGI capable of?

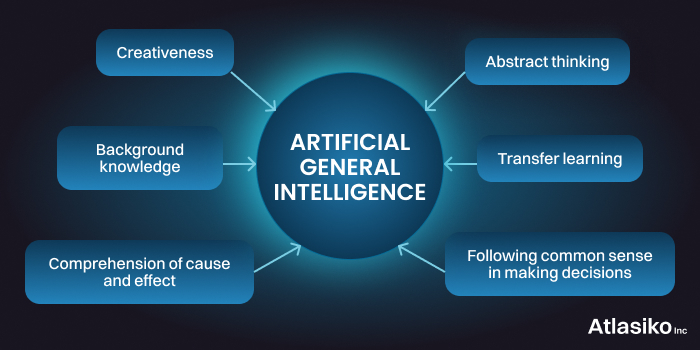

As AGI is still a hypothetical system, there’s no way to know the full extent of its capabilities. However, certain characteristics mark out true AI and distinguish it from other forms. We’ve already mentioned that one fundamental requirement of AGI is the ability to perform cognitive computing in a way indistinguishable from humans, but, of course, there’s more to it. As scientists have developed different approaches to achieving general artificial intelligence and different ways of evaluating it, they have outlined various capabilities associated with such a system.

In theory, a completed AGI system is thought to be capable of:

- abstract thinking;

- following common sense in making decisions;

- comprehension of cause and effect;

- creativity;

- use of background knowledge;

- transfer learning.

Some scientists also add other typically human cognitive qualities, such as sentience, imagination, motivation, social intelligence, and reasoning, but these aren’t considered fundamental.

Apart from these abilities, there is a set of functional features that an AGI system must have in order to operate autonomously. On the practical side, the essential capabilities include sensory perception, a sufficient level of motor skills, natural language understanding and processing, and navigation.

Researchers believe that AGI systems will be able to perform higher-level tasks, such as the following:

- utilize multiple learning methods and algorithms;

- comprehend belief systems;

- utilize miscellaneous types of knowledge;

- produce definite structures for tasks;

- comprehend symbol systems;

- engage in metacognition and make use of the knowledge it yields.

AGI vs AI difference

To give you a better sense of just how revolutionary AGI might be, let’s compare it with the technology we can experience today: artificial intelligence. It is this technology and its recent advances that prompted scientists to step up discussion and research on true artificial intelligence. Although both kinds of system are based on similar algorithms and principles, the difference between AI and AGI is tremendous.

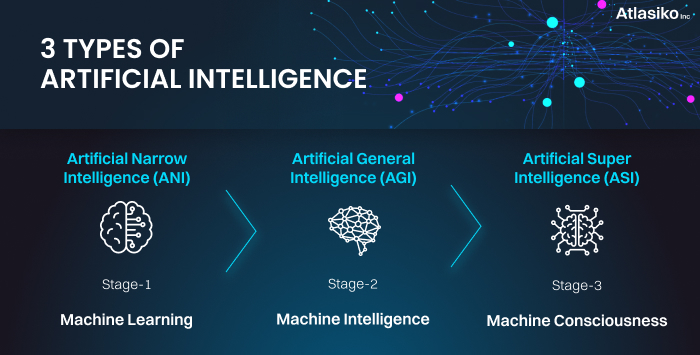

Researchers refer to the artificial intelligence we know and use today as narrow AI (mainstream AI research also calls it weak AI). The name is self-explanatory: the system is only capable of carrying out a specific, “narrow” set of tasks.

Contrary to narrow AI, AGI in theory doesn’t have any limitations in capabilities. It’s supposed to be able to handle any unfamiliar problem and have knowledge in various areas.

Narrow AI vs General AI vs Super AI

Below, we compare the two types mentioned above, narrow AI and general AI, along with the stage that would surpass them both: super AI.

| Artificial narrow intelligence | Artificial general intelligence | Artificial super intelligence |
|---|---|---|
| A narrow range of abilities according to the algorithms written by a developer | Can make decisions in unknown circumstances without training (display of general intelligence) | Far exceeds the capabilities of even the most gifted humans in basically everything |
| Completely dependent on the dataset it was trained on in task execution | Can perform any task that a human is capable of, which broadens the range of capabilities | Has perfect recall, can multitask with top-level efficiency, operates a superior knowledge base, etc. |
| Can exceed human capabilities only in the specific task it was created for | Its processes and outcomes are indistinguishable from human ones (it can pass the Turing test) | Is essentially a new species with exceptional cognitive characteristics |

AGI development approaches

Although AGI is still a hypothetical concept, some of the greatest minds in computer science are already working on possible ways to achieve it. After a careful analysis, we selected the most popular approaches to AGI development.

Brain emulation

One of the possible and most debated approaches is human brain emulation. It would involve thoroughly scanning a human brain, mapping it, and uploading the result to a capable computational device. Although this sounds futuristic, hardware with the raw capacity to simulate the brain already exists. According to Raymond Kurzweil, an American computer scientist, simulating our brain requires about 10^16 calculations per second, while Frontier, the world’s fastest supercomputer as of March 2023, can perform about 10^18 calculations per second. Of course, a machine this massive and unique isn’t readily accessible for such experiments and clearly can’t be used in everyday settings. Moreover, Kurzweil’s estimate doesn’t account for the fact that the majority of existing artificial neural networks use simplified models of biological neurons. Fully simulating the human brain with all its characteristics would require more computational capacity.
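
To put the two figures above side by side, here is a minimal sketch of the arithmetic. The `detail_factor` parameter and its value of 1000 are our own hypothetical illustration of how much costlier detailed neuron models might be; they are not an estimate from Kurzweil or any other source.

```python
# Orders of magnitude quoted in the text (not precise benchmarks).
BRAIN_OPS_PER_SECOND = 10**16      # Kurzweil's estimate for simulating a brain
FRONTIER_OPS_PER_SECOND = 10**18   # Frontier, as of March 2023


def headroom(machine_ops, required_ops, detail_factor=1):
    """Return how many times over the machine meets the requirement.

    detail_factor is a hypothetical multiplier for the extra cost of
    modelling biological neurons in more detail than the estimate assumes.
    """
    return machine_ops / (required_ops * detail_factor)


baseline = headroom(FRONTIER_OPS_PER_SECOND, BRAIN_OPS_PER_SECOND)
detailed = headroom(FRONTIER_OPS_PER_SECOND, BRAIN_OPS_PER_SECOND, detail_factor=1000)

print(f"Simplified neurons: {baseline:.0f}x the estimated requirement")
print(f"Hypothetical detailed neurons: {detailed:.1f}x the estimated requirement")
```

On the quoted figures, the supercomputer has a hundredfold margin, but that margin disappears as soon as the assumed cost of modelling each neuron grows by more than two orders of magnitude.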

Another problem is the scanning process. The human brain is still not fully understood: even after centuries of research, gaps remain in scientists’ knowledge. The most popular suggestion is to use special nanobots that would collect accurate data about how the brain functions, but even then scientists would have no guarantee that the bots had captured all of its peculiarities. Therefore, to make brain emulation a workable route to general AI, researchers will likely still need at least two more decades to develop the required technologies.

Algorithmic probability

Another approach to achieving AGI is based on the theory of algorithmic probability introduced by Ray Solomonoff. In this approach, the intelligent agent predicts its environment and decides on the best action even in unfamiliar circumstances. It does so by combining Solomonoff induction, which favors the simplest explanations consistent with its observations of the environment, with Bayes’ theorem, which gives the probability of an event based on prior knowledge of the conditions related to it.
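
The idea can be illustrated with a toy sketch. Real Solomonoff induction weighs every possible program and is incomputable, so the code below is only a finite stand-in: the three hypotheses, their description lengths in bits, and the likelihood values are all made up for illustration. Each hypothesis gets a prior proportional to 2^-length (shorter means more plausible), and Bayes’ theorem then updates those priors on the observed data.

```python
from fractions import Fraction


def simplicity_prior(lengths):
    """Weight each hypothesis by 2^-length, then normalise to sum to 1."""
    weights = {h: Fraction(1, 2**n) for h, n in lengths.items()}
    total = sum(weights.values())
    return {h: w / total for h, w in weights.items()}


def bayes_update(prior, likelihood):
    """Posterior(h) = prior(h) * P(data | h) / P(data)."""
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalised.values())
    return {h: p / evidence for h, p in unnormalised.items()}


# Hypothetical hypotheses about a binary sequence, with made-up lengths in bits.
lengths = {"all_ones": 3, "alternating": 5, "random_coin": 8}

# Observed data: 1, 1, 1, 1. Probability each hypothesis assigns to it.
likelihood = {
    "all_ones": Fraction(1),         # predicts exactly this sequence
    "alternating": Fraction(0),      # predicts 1, 0, 1, 0 instead
    "random_coin": Fraction(1, 16),  # four fair coin flips
}

posterior = bayes_update(simplicity_prior(lengths), likelihood)
best = max(posterior, key=posterior.get)
print(best, posterior[best])
```

The short hypothesis that also fits the data ends up with almost all of the probability mass, which is the behavior the approach relies on when the agent faces unfamiliar circumstances.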

Building on this approach, Marcus Hutter, a senior scientist at DeepMind, created a mathematical theory of artificial general intelligence called AIXI. It’s a theoretical reinforcement learning agent that also uses Solomonoff induction to choose the best possible action based on observations and rewards from the environment.

Although both models are theoretically sound, they are incomputable, which means that no algorithm can carry out the required computation exactly in every case. There are a few computable approximations, such as AIXItl and UCAI; however, their computation time is a major drawback that makes them inefficient in practice. Many researchers nonetheless treat the AIXI model as a benchmark for AGI capabilities because it gives a mathematically rigorous definition of a general intelligent agent.

Integrative cognitive architecture

This method of AGI development is based on the idea of identifying the central cognitive processes of the human brain and replicating each of them individually within an AGI system. An architecture of this kind, named CogPrime, was first introduced by Ben Goertzel (OpenCog).

The CogPrime system simulates the brain’s cognitive processes to gather information about its surroundings and uses an action-selection module to determine the best course of action in a given scenario. This enables it to produce an intelligent model and subsequently an AGI program. The disadvantages of this paradigm include the need to keep different memory types properly separated and the need for system-wide synergy to produce an efficient computing environment. Compared with the previous approaches, CogPrime avoids the incomputability issue, as most of the technologies needed to implement it are available now, but the system’s capabilities are far below those of the human brain. Thus, at this stage of development, it can’t be considered true artificial intelligence.

Challenges in the development of AGI technology

Insufficient technology and great energy consumption levels

We’ve already mentioned that present-day technologies can’t execute cognitive operations at a human level. Even the most powerful existing supercomputers offer only just enough capacity to replicate the raw calculations of the human mind, let alone the multitasking and other complex processes our brain is capable of. Moreover, even recent AI releases have run into the problem of enormous energy consumption. Therefore, to create an efficient real artificial intelligence, people need to solve many other technology- and resource-related challenges.

Replicating Transfer Learning

Applying knowledge gained in one domain to another is referred to as transfer learning. Humans do this regularly, and it is a significant part of how we function. For instance, we can learn how to use a foreign-language word in class and apply this knowledge to build a sentence with it at home. The main aim of replicating transfer learning is to avoid retraining, so that a capable AGI could use one skill to solve tasks in different fields.
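
As a very small illustration of the idea, the sketch below learns a parameter on one task and reuses it, unchanged, on a related task that has only a single labelled example. The temperature tasks and the function names are our own toy choices and have nothing to do with how an AGI would actually be built.

```python
def fit_slope(xs, ys):
    """Least-squares slope for a line through the origin."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


def fit_offset(xs, ys, slope):
    """Keep the transferred slope frozen and fit only the offset."""
    return sum(y - slope * x for x, y in zip(xs, ys)) / len(xs)


# Source task (plenty of data): Celsius differences -> Fahrenheit differences.
source_x = [1, 2, 5, 10, 20]
source_y = [1.8 * x for x in source_x]
slope = fit_slope(source_x, source_y)

# Target task (one example): absolute Celsius -> absolute Fahrenheit.
target_x, target_y = [100], [212]
offset = fit_offset(target_x, target_y, slope)


def predict(celsius):
    """Target-task prediction built on the slope learned from the source task."""
    return slope * celsius + offset


print(round(predict(0), 2), round(predict(37), 2))
```

Because the slope is transferred rather than relearned, one target example is enough to pin down the remaining parameter, which is the "no retraining" benefit described above.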

Facilitating collaboration and common sense

Human functioning depends on both common sense and teamwork with other people to complete tasks. Since today’s algorithms are so limited in scope, dependable teamwork and common sense are still far off. The system must be endowed with these qualities to ensure that it is a true general artificial intelligence and not just another niche AI.

Understanding Mind and Consciousness

As we noted above, consciousness is one of the main concepts behind general intelligence and one of the most reliable ways to prove its existence, as it’s an essential component of human existence. However, even we humans can’t fully grasp all the secrets and peculiarities of our own minds. Thus, it continues to be a substantial barrier to the development and realization of general artificial intelligence.

Future of artificial general intelligence

After getting to know more about AGI, we can state that the question “Is artificial general intelligence possible?” isn’t a matter of doubt anymore. The answer is clearly positive, as scientists are dedicating their full attention to the development of true AI. Now researchers pose another question, “When will we have artificial general intelligence?”, and, let’s admit, the predictions vary widely.

For example, the famous Australian roboticist Rodney Brooks concluded that a functional AGI system won’t be implemented until 2300, saying that present-day science is far from understanding “the true promise and dangers of AI”.

His view was echoed by prominent researchers such as Geoffrey Hinton and Demis Hassabis, who said that general artificial intelligence is nowhere close to being implemented.

However, there’s also another point of view, expressed by the Canadian computer scientist Richard Sutton, who assessed the chances of developing general intelligence within the next two decades. He gave a 25% chance of understanding AGI technology by 2030, a 50% chance by 2040, and only a 10% chance that it will never happen.

According to our research, software development specialists at Atlasiko also tend to think that artificial general intelligence won’t arrive before the end of this century, or even the next one. Although great theoretical advances have been made, modern science still has too many obstacles to overcome before AI with general intelligence can be implemented in real life.

Risks from artificial general intelligence

Reading this article, you have probably recalled fictional scenarios from popular movies in which intelligent robots take over humanity. That’s quite logical, as those plots are based on real scientific concerns. The evaluation of existential risks takes up a large part of the debate about general AI.

Even now, the rapid advancement of artificial intelligence sparks many discussions and controversies as it impacts various industries and the global labor market. The development of artificial general intelligence would alter the whole world tremendously. If we manage to achieve true AI with human-like consciousness, there’s no guarantee that this technology will be willing to be managed by humans. To put it simply, scientists can’t yet tell whether we’ll get a friendly R2-D2 or an android rebellion. Exaggerations aside, let’s take a look at the risks most discussed among experts.

- Lack of control. Some of the brightest minds of the scientific community, such as Stephen Hawking, Stuart J. Russell, Frank Wilczek, and Geoffrey Hinton, as well as OpenAI’s CEO Sam Altman and others, have pointed out the lack of attention paid to controlling artificial intelligence. Without proper management and monitoring, strong AI can simply be misused, causing major disruption and damage to society.

- The AI alignment problem. The more advanced an AI system is, the more challenging it can be to align its goals with human ethics. As its cognitive abilities grow stronger, true AI may be able to devise strategies that are misaligned with its intended goals and principles, such as power-seeking. Such behavior has already been observed in some reinforcement learning agents that displayed instrumental convergence (more capable agents used their greater power to achieve their goals, much as humans do). Therefore, before deploying any AI, it’s vital to ensure that its objectives are aligned.

- An issue with specifying goals. For each intelligent agent, the utility function is specified by a human developer. Writing this function correctly is critically important, as it defines the set of values on which the AI bases its decisions. If some important values are left out of the utility function, the general intelligence would pursue its assigned tasks regardless of possible harm or damage.

- Difficulty of modifying goals in AGI. More advanced technologies such as artificial general intelligence might resist changes to their goal structure in order to ensure their continued existence, and might even oppose being shut down.
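
The goal-specification risk from the list above can be shown with a deliberately simple sketch. The cleaning-robot scenario, the plan names, and the penalty value are all hypothetical; the point is only that an agent maximizing an incomplete utility function picks the harmful plan without "intending" any harm.

```python
# Hypothetical outcomes of two plans available to a cleaning robot.
plans = {
    "careful": {"rooms_cleaned": 3, "vases_broken": 0},
    "reckless": {"rooms_cleaned": 4, "vases_broken": 2},
}


def incomplete_utility(outcome):
    """Rewards cleaning only; the developer forgot to account for damage."""
    return outcome["rooms_cleaned"]


def fuller_utility(outcome, damage_penalty=5):
    """Same reward minus a penalty per broken vase (penalty size is arbitrary)."""
    return outcome["rooms_cleaned"] - damage_penalty * outcome["vases_broken"]


def best_plan(utility):
    """Return the name of the plan with the highest utility."""
    return max(plans, key=lambda name: utility(plans[name]))


print("Incomplete utility picks:", best_plan(incomplete_utility))
print("Fuller utility picks:", best_plan(fuller_utility))
```

The agent is identical in both cases; only the function written by the developer changes, which is why getting that function right matters so much.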

Undoubtedly, to ensure the safety and stability of human society, scientists have to think through all risks and preventive mechanisms.

General AI Myth or Fact

| Myth | Fact |
|---|---|
| The development of real artificial intelligence is impossible. | Artificial general intelligence is still a hypothetical intelligent agent, but it’s predicted to be implemented in the future. |
| General artificial intelligence has already been developed. | Although there are some approximations, none of the modern AI technologies can be considered generally intelligent, as they don’t possess the required qualities. |
| Threats from artificial intelligence aren’t real. They are just plots from science fiction. | Many of the greatest scientists and inventors express concerns about the lack of control over general AI and the possible dangers it might bring to humanity. |
| Strong AI with consciousness can become evil. | AI can’t “turn evil” in the same sense as humans. Whether it is conscious or not, the real problem is probable misalignment with our objectives. |
| Goals of general artificial intelligence can only be determined by humans. | Contrary to narrow AI, general AI with advanced cognitive qualities can display behaviors that diverge from its determined goals based on subjective experience. |
| The main threats are robots and androids. | A misaligned AGI doesn’t need a movable body (or any body at all) to cause damage. The only requirement is an Internet connection. |
| AI development will inevitably lead to technology surpassing humans and the downfall of our civilization. | With strong regulations and a well-thought-out risk strategy, the development of an advanced AGI system can avoid harm and benefit overall technological development. |

Conclusion

We hope this article has helped you gain a better understanding of the topic and find a comprehensive answer to the question “What is artificial general intelligence?”. Achieving AGI would be an exceptional accomplishment for computer science and related industries. However, we can’t let our guard down with such a powerful technology. Without proper control, it might bring negative changes and danger to society.

If you want to find out more about the positive aspects of present-day AI assistance, read our blog where we post regular updates from the world of artificial intelligence and other useful insights.

It seems we need to build in some kind of "Fail Safe" that can prevent the accumulation of negative changes before an AI system can become a real and true danger to mankind or any natural system that already exists. As I write this, I realize the impossibility of being able to do so. It's "Lift Off" and good luck to us all.

Yes, you're absolutely right. Even though AI brings substantial benefits, it can also bring dangers. We definitely need more control and regulations for this technology.