GitLab, the developer platform, has introduced a new AI-driven security feature that uses a large language model to explain potential vulnerabilities to developers. The company plans to extend the functionality in the future so that AI can also resolve these vulnerabilities automatically. The feature, named "explain this vulnerability", helps teams identify the best way to fix a vulnerability in the context of their code base. It does this by combining general information about the vulnerability with specific insights from the user's code, making problems quicker and easier to fix.

The company's overall philosophy behind adding AI features is to enable "velocity with guardrails". This means combining AI code and test generation with the company's full-stack DevSecOps platform to ensure that everything the AI generates can be deployed safely. GitLab emphasizes that all of its AI features are built with privacy in mind. The company only sends customers' intellectual property, which is their code, to a model that runs within GitLab's cloud architecture. GitLab will not use its customers' private data to train its models.

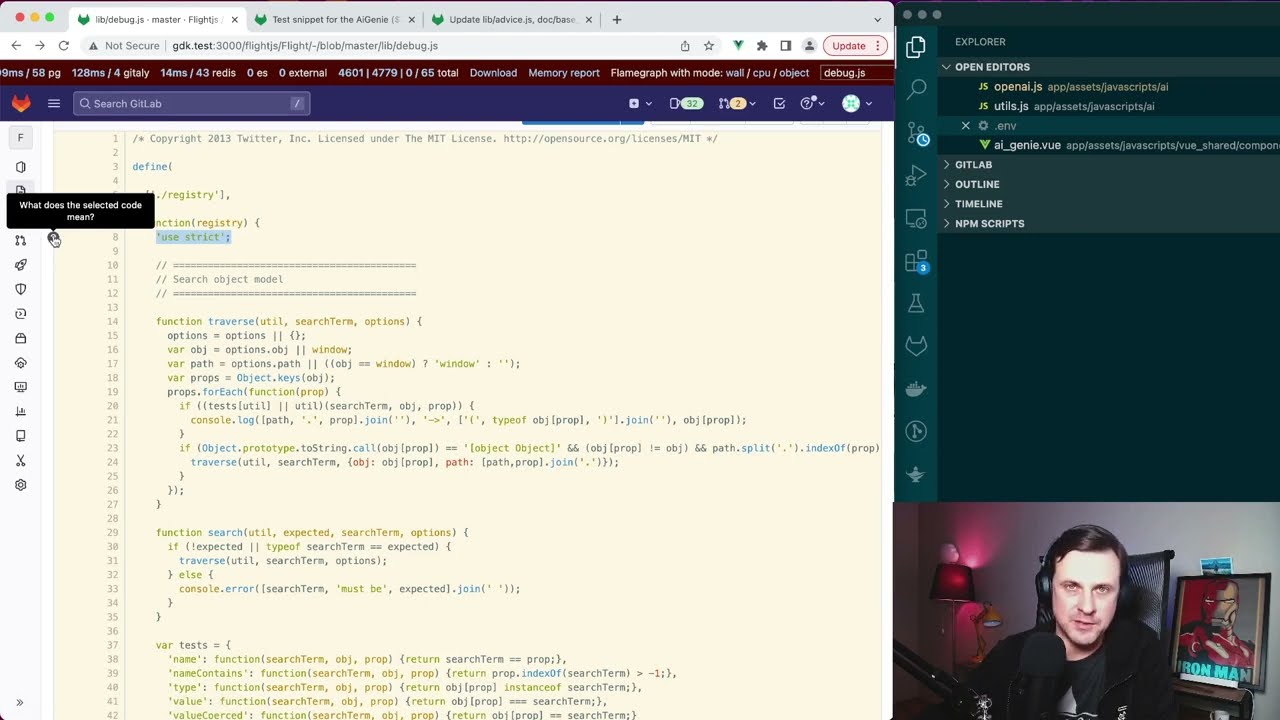

The "explain this code" feature has proven useful not only for developers but also for quality assurance and security teams, who now have a better understanding of what they should test. GitLab's ultimate goal is to help these teams generate unit tests and security reviews automatically, which would then be integrated into the overall GitLab platform. GitLab's Chief Product Officer, David DeSanto, said that the goal of the company's AI initiative is a tenfold improvement in efficiency. This efficiency applies not only to individual developers but to the whole development lifecycle.

GitLab's latest DevSecOps report shows that 65% of developers are already using AI and machine learning (ML) in their testing efforts or plan to do so within the next three years. In today's report, Dave Steer writes, “Our DevSecOps Platform helps teams fill critical gaps while automatically enforcing policies, applying compliance frameworks, performing security tests using GitLab’s automation capabilities, and providing AI-assisted recommendations – which frees up resources.”

In conclusion, GitLab’s new AI-driven security feature could meaningfully improve how organizations work. Data and policy matter, and a solution like this can take companies to a new level without compromising security.
