IBM Research has unveiled an analog chip that could reshape artificial intelligence (AI) inference, promising GPU-level performance with drastically better power efficiency. GPUs have long been the go-to hardware for AI processing, but their heavy power draw translates into high operating costs.

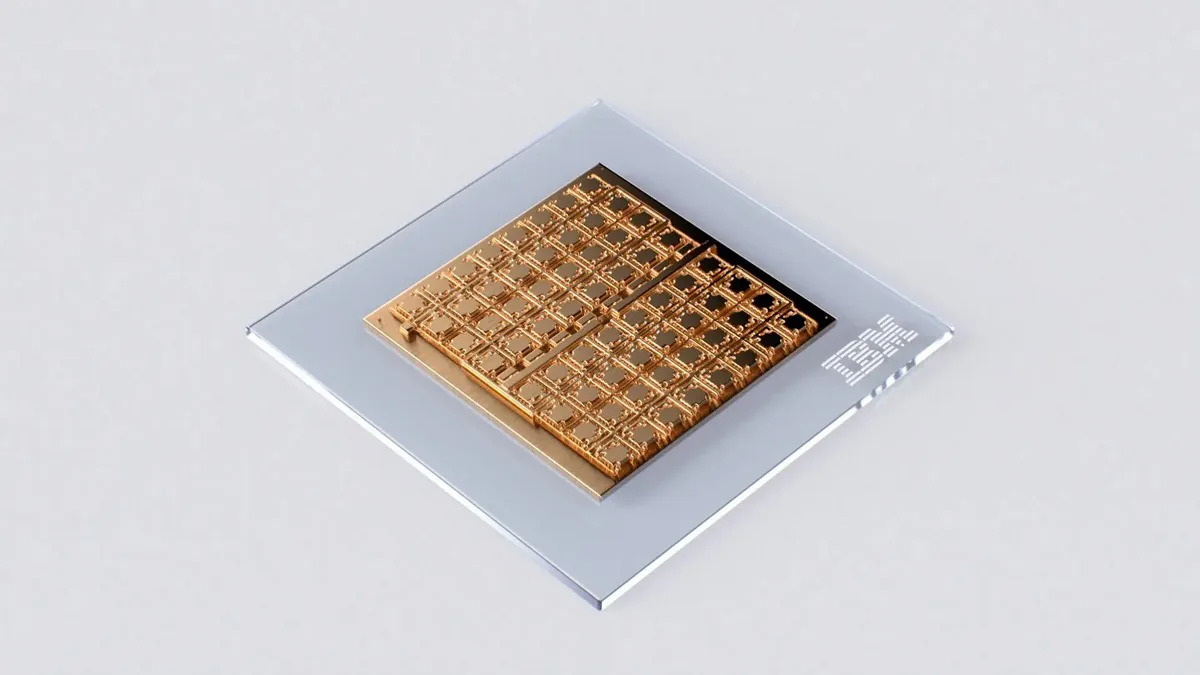

At the heart of this innovation is an analog AI chip that is still under development. Its standout feature is the ability to handle both computation and memory storage in a single location. The design takes inspiration from the workings of the human brain and yields a marked improvement in power efficiency. This is a striking departure from the norm, where shuffling data between memory and processing units hampers computational speed and raises energy consumption.

In a series of internal trials, the chip achieved an accuracy of 92.81 percent on the CIFAR-10 image dataset. This evaluation, which gauges the compute precision of analog in-memory computing, places IBM's chip on par with existing chips that use similar technology. Energy consumption during testing was 1.51 microjoules per input, underlining the chip's energy efficiency.
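To put the per-input figure in perspective, the short Python sketch below scales it up. It assumes the 1.51 microjoule figure applies uniformly to every input, and it uses the 10,000-image size of the standard CIFAR-10 test set purely as an illustrative workload; the article does not say how many images the trials covered. The function names are hypothetical.

```python
# Energy per inference input, as quoted in the article (1.51 microjoules).
ENERGY_PER_INPUT_J = 1.51e-6

# Size of the standard CIFAR-10 test split, used here only as an
# illustrative workload (assumption: not stated in the article).
CIFAR10_TEST_IMAGES = 10_000


def energy_for_inputs(num_inputs: int) -> float:
    """Total energy in joules, assuming a constant cost per input."""
    return num_inputs * ENERGY_PER_INPUT_J


def inputs_per_joule() -> float:
    """How many inputs one joule would cover at the quoted rate."""
    return 1.0 / ENERGY_PER_INPUT_J


if __name__ == "__main__":
    total_mj = energy_for_inputs(CIFAR10_TEST_IMAGES) * 1e3
    print(f"{CIFAR10_TEST_IMAGES} inputs: {total_mj:.1f} mJ")
    print(f"Inputs per joule: {inputs_per_joule():,.0f}")
```

Under these assumptions, classifying 10,000 images would take roughly 15.1 millijoules, and a single joule would cover on the order of 660,000 inputs.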

Published recently in the journal Nature Electronics, the accompanying research paper details the chip's architecture. Fabricated in 14 nm complementary metal-oxide-semiconductor (CMOS) technology, the chip contains 64 analog in-memory compute cores, also referred to as "tiles." Each core holds a 256-by-256 crossbar array of synaptic unit cells, which lets it perform the kind of computation carried out by a layer of a deep neural network (DNN) model. The chip also incorporates a global digital processing unit that handles more complex operations required by certain neural networks.
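The crossbar idea can be illustrated with a small, idealized simulation. The sketch below is not IBM's implementation: it models a single tile as a grid of conductances, applies input voltages to the rows, and sums the resulting currents along each column, which is how a crossbar performs a matrix-vector multiply in place. The tile count and dimensions come from the article; the function names, the optional `noise_std` parameter, and the use of signed weights (real devices have non-negative conductances) are simplifying assumptions.

```python
import random

# Figures taken from the article.
NUM_TILES = 64
TILE_ROWS = 256   # input lines per tile
TILE_COLS = 256   # output lines per tile


def crossbar_mvm(conductances, voltages, noise_std=0.0, rng=None):
    """Idealized analog matrix-vector multiply on one crossbar tile.

    Cell (i, j) stores a weight as a conductance G[i][j]. A voltage V[i]
    on row i drives a current G[i][j] * V[i] through that cell (Ohm's
    law), and the currents on column j add up (Kirchhoff's current law).
    `noise_std` is a hypothetical knob for additive Gaussian read noise.
    """
    rng = rng or random.Random(0)
    currents = [0.0] * len(conductances[0])
    for g_row, v in zip(conductances, voltages):
        for j, g in enumerate(g_row):
            currents[j] += g * v
    if noise_std > 0.0:
        currents = [c + rng.gauss(0.0, noise_std) for c in currents]
    return currents


def total_weight_capacity():
    """Number of unit cells across all tiles, one weight per cell."""
    return NUM_TILES * TILE_ROWS * TILE_COLS


if __name__ == "__main__":
    rng = random.Random(42)
    # Signed weights for simplicity (assumption, see lead-in).
    G = [[rng.uniform(-1.0, 1.0) for _ in range(TILE_COLS)]
         for _ in range(TILE_ROWS)]
    V = [rng.uniform(0.0, 1.0) for _ in range(TILE_ROWS)]
    outputs = crossbar_mvm(G, V, noise_std=0.01, rng=rng)
    print(len(outputs), "column outputs from one tile")
    print(total_weight_capacity(), "unit cells across all tiles")
```

With 64 tiles of 256 by 256 cells each, the chip holds 4,194,304 unit cells in total. Because the weights stay in the array and the multiply-accumulate happens where they are stored, no weight data has to move between a separate memory and processor during inference.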

IBM's innovation is a notable step forward, particularly given the escalating power demands of AI processing systems. Reports indicate that AI inferencing racks can draw up to ten times the power of standard server racks. This surge drives up the cost of AI processing and raises valid environmental concerns. In such a climate, any gains in efficiency are likely to be welcomed by the industry at large.

The chip's emergence could also carry a silver lining for the gaming community. A specialized, power-efficient AI chip could reduce reliance on GPUs for AI work, which might in turn lead to lower GPU prices. Such optimism remains speculative, however, given that the chip is still in development. The timeline for its commercial debut is unknown, and until it arrives, GPUs will continue to dominate AI processing. Whether they will become more affordable in the near future remains uncertain.